Jan 13, 2020

Over the last 18 months we’ve embarked on a mission to dramatically modernize our development processes. Why? The standard development process doesn’t align well with delivering what people really want. By people, we mean everyone from those buying the technology to those consuming digital experiences. People want the experience to work well every time, 24 hours a day, 365 days a year. No technology buyer, from sponsoring executives to marketing practitioners, wants to hear that something just released is broken, broke something else, or will be optimized soon.

So we set out to improve our development process in order to improve quality. This has led to heavier use of unit testing and user interface testing in our Sitecore projects. Oshyn believes test automation is the key to unlocking continuous delivery (CD) for more frequent builds and releases. Without it, it’s impossible to regression test an entire site’s functionality, and you are either:

- Not regression testing the majority of the site, or

- Spending 40-60% of your sprint doing retesting

Automated testing provides objective proof of the quality of your system and gives managers key metrics for managing the quality of the project over time.

We typically provide reports on our projects for two main purposes:

- Development-focused

  - Gate/reject builds that don’t pass the test suite

  - Scan for vulnerabilities in code

  - Scan for code quality and adherence to standards

  - Dependency tracking

- Management-focused

  - Show increase in tests over time

  - Show proof of quality prior to release

  - Give managers and content editors information they need to improve both the SEO and performance of the site

Developer-focused Reports

The following developer-focused reports are created by our build process for every build:

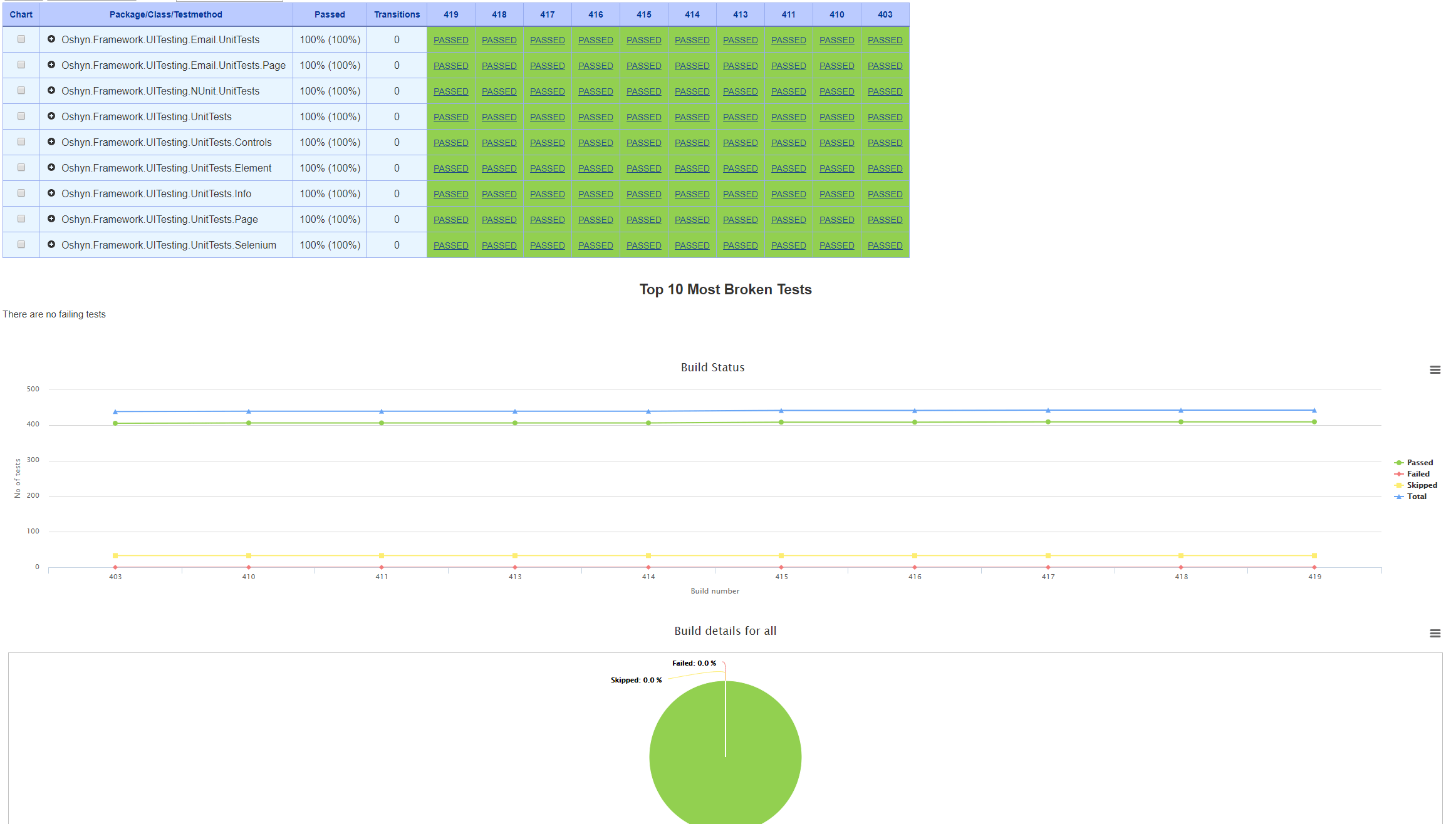

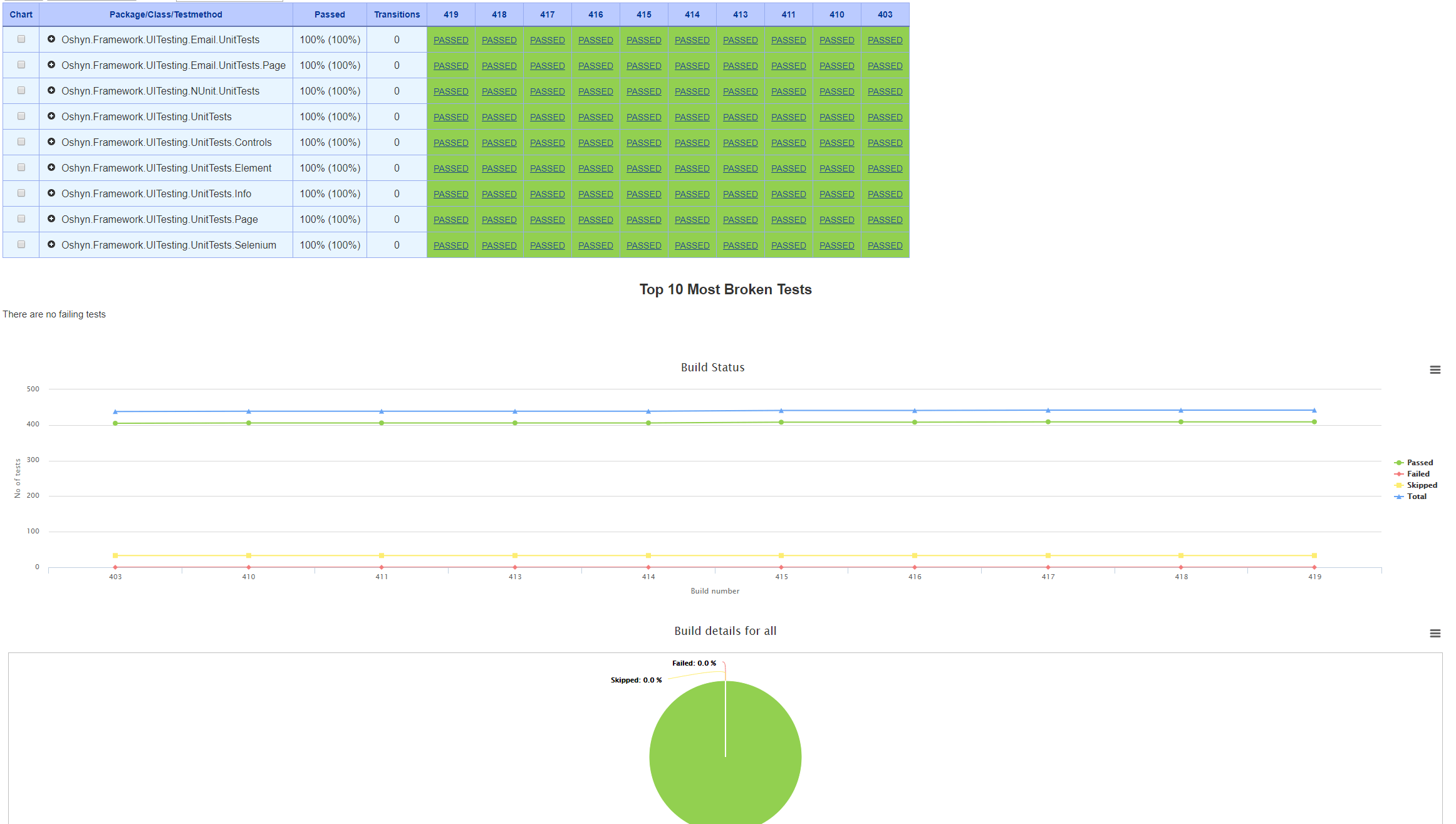

Unit Test Results

Shows which unit tests failed in the build and provides historical results across builds. Any failing test fails the build, which stops the promotion process so the deployment won’t happen.
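As a rough illustration of gating a build on unit test results, here is a minimal Python sketch. It assumes the test runner can emit a JUnit-style XML report, which is an assumption for illustration and not necessarily the format our pipeline uses. The function names `count_failures` and `gate` and the sample report are hypothetical.

```python
import xml.etree.ElementTree as ET


def count_failures(report_xml: str) -> int:
    """Count failed or errored test cases in a JUnit-style XML report."""
    root = ET.fromstring(report_xml)
    failed = 0
    # iter() walks the whole tree, so <testsuite> and <testsuites> roots both work.
    for case in root.iter("testcase"):
        if case.find("failure") is not None or case.find("error") is not None:
            failed += 1
    return failed


def gate(report_xml: str) -> int:
    """Return a process exit code: 0 lets the build promote, 1 stops it."""
    failed = count_failures(report_xml)
    if failed:
        print(f"{failed} unit test(s) failed; stopping promotion")
        return 1
    print("all unit tests passed")
    return 0


# Hypothetical report with one passing and one failing test.
SAMPLE_REPORT = """
<testsuite name="Example.Tests">
  <testcase classname="Example.Tests.CartTests" name="AddsItem"/>
  <testcase classname="Example.Tests.CartTests" name="RemovesItem">
    <failure message="expected 0 items but found 1"/>
  </testcase>
</testsuite>
""".strip()

exit_code = gate(SAMPLE_REPORT)
```

In a build step, the returned code would typically be passed to `sys.exit` so the CI server marks the build as failed and skips deployment.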

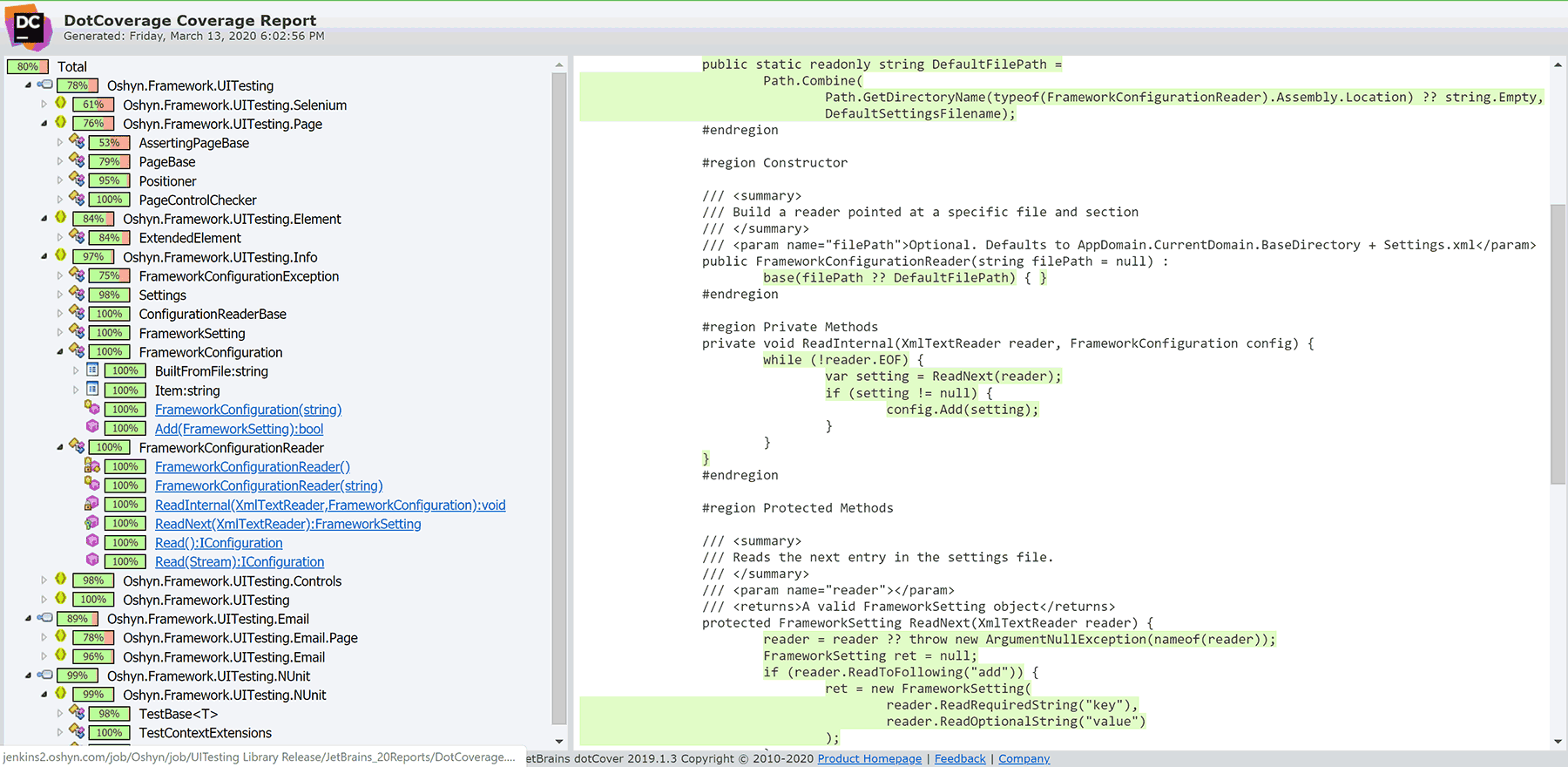

Code Coverage

Shows unit test code coverage across lines of code, methods, classes, namespaces, and entire assemblies (based on JetBrains dotCover).

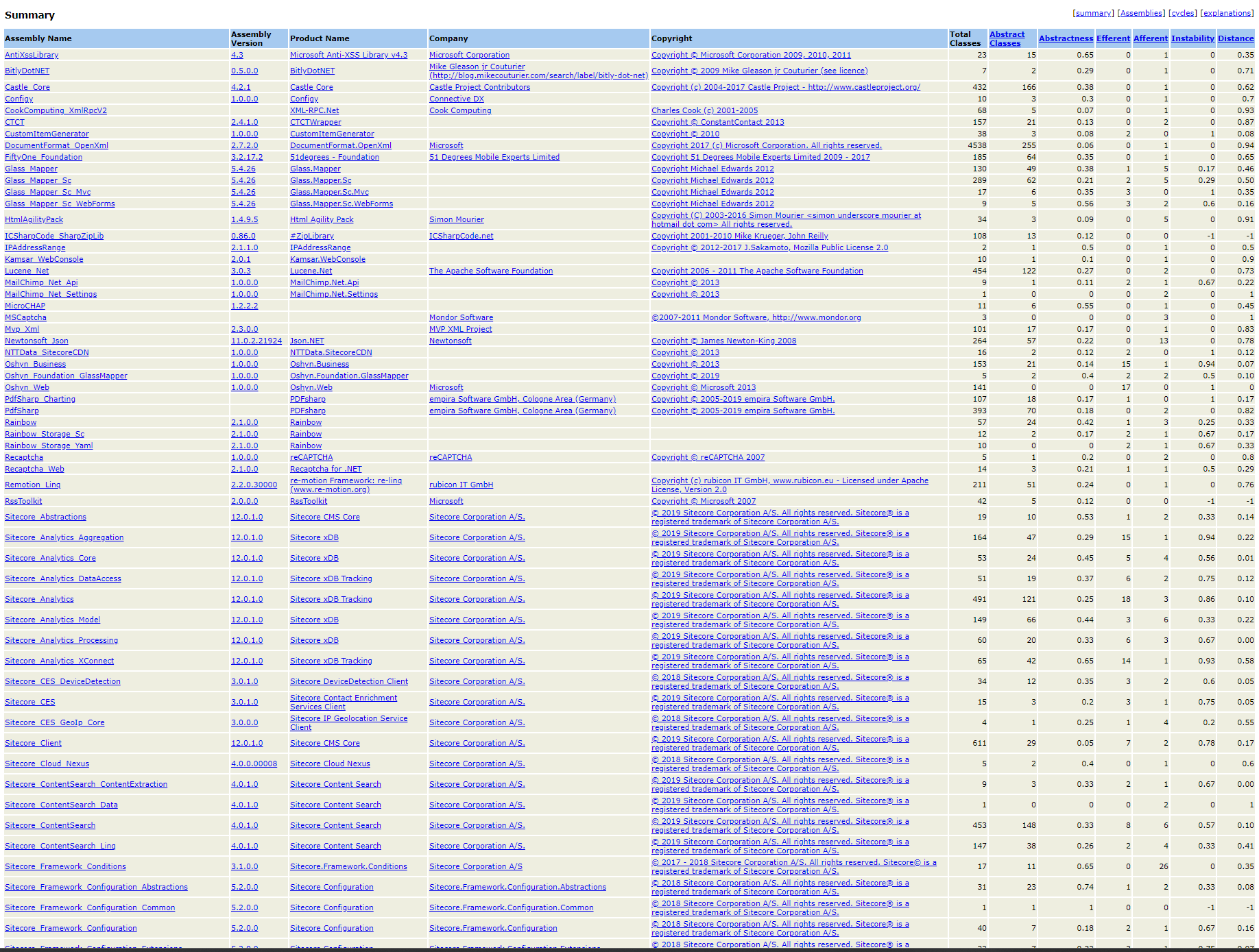

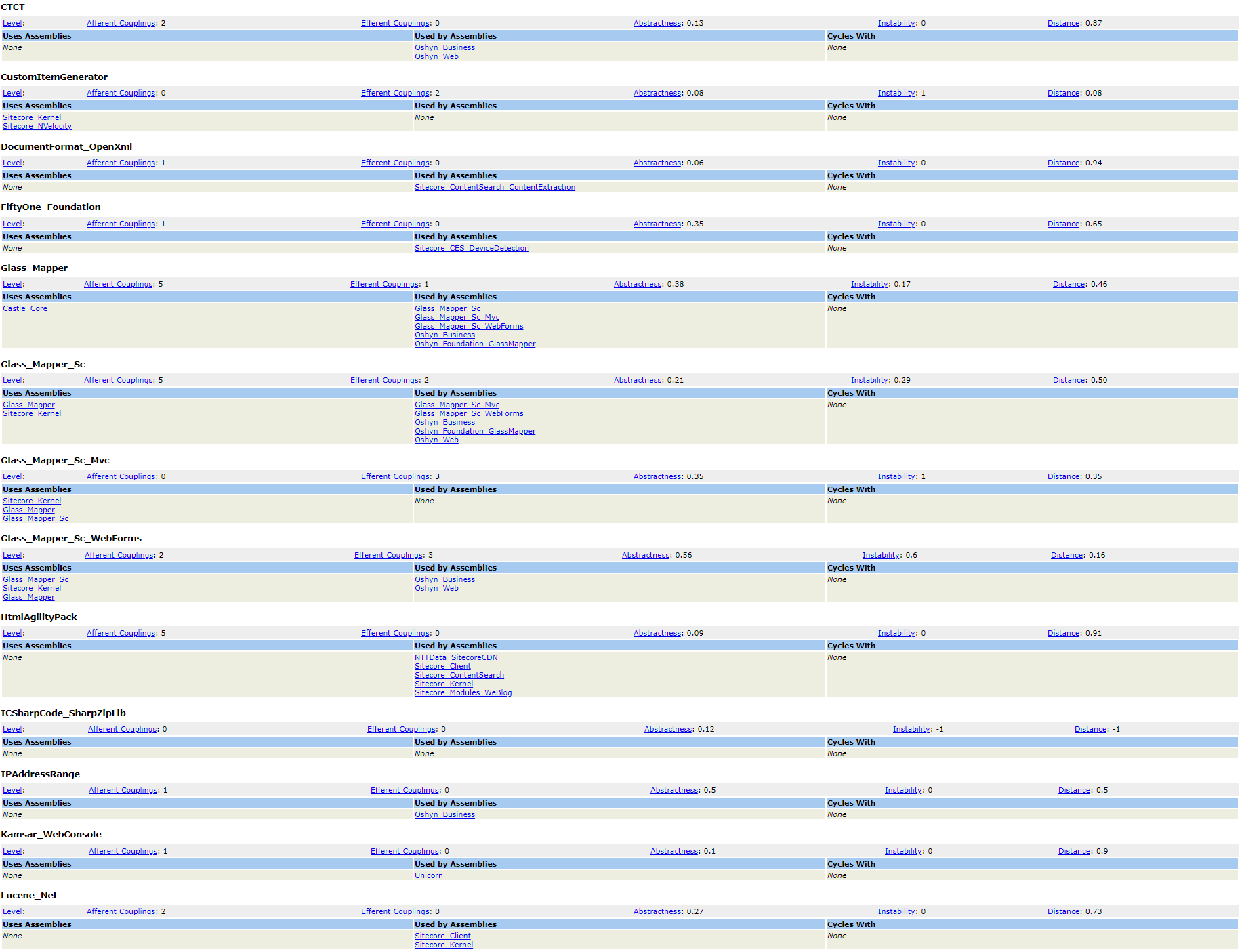

Assembly Analysis

Shows all assembly signatures in the solution and the total number of classes, and reports assembly dependencies in list form (based on Assembly Analyzer from Kirk Knoernschild).

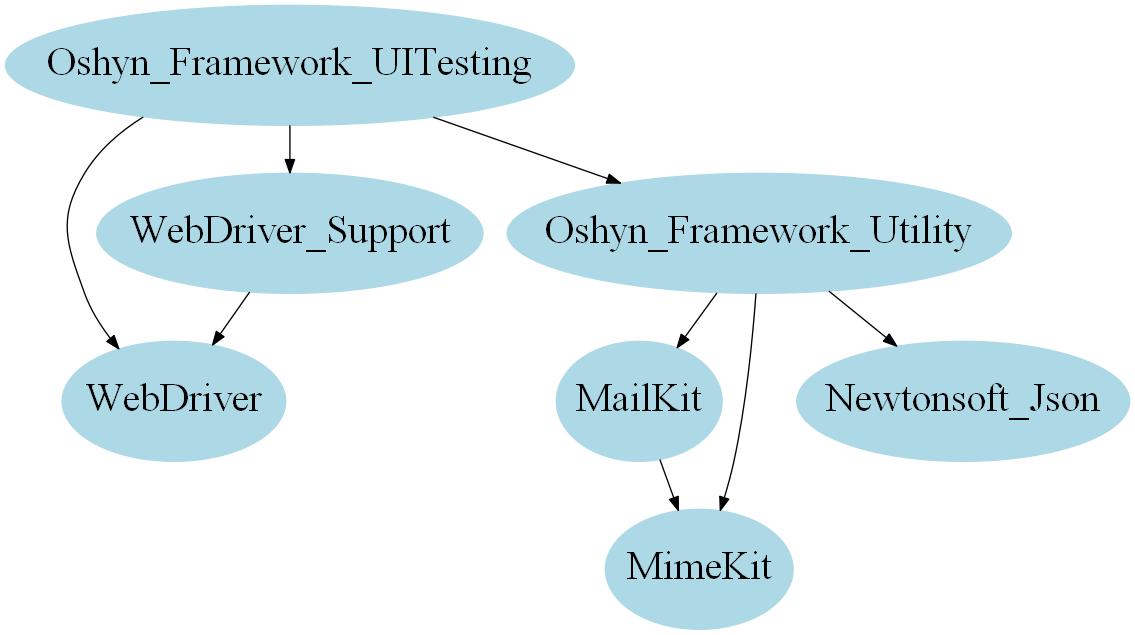

Assembly Dependency Visualization

Visually shows which assemblies are dependent on other assemblies.

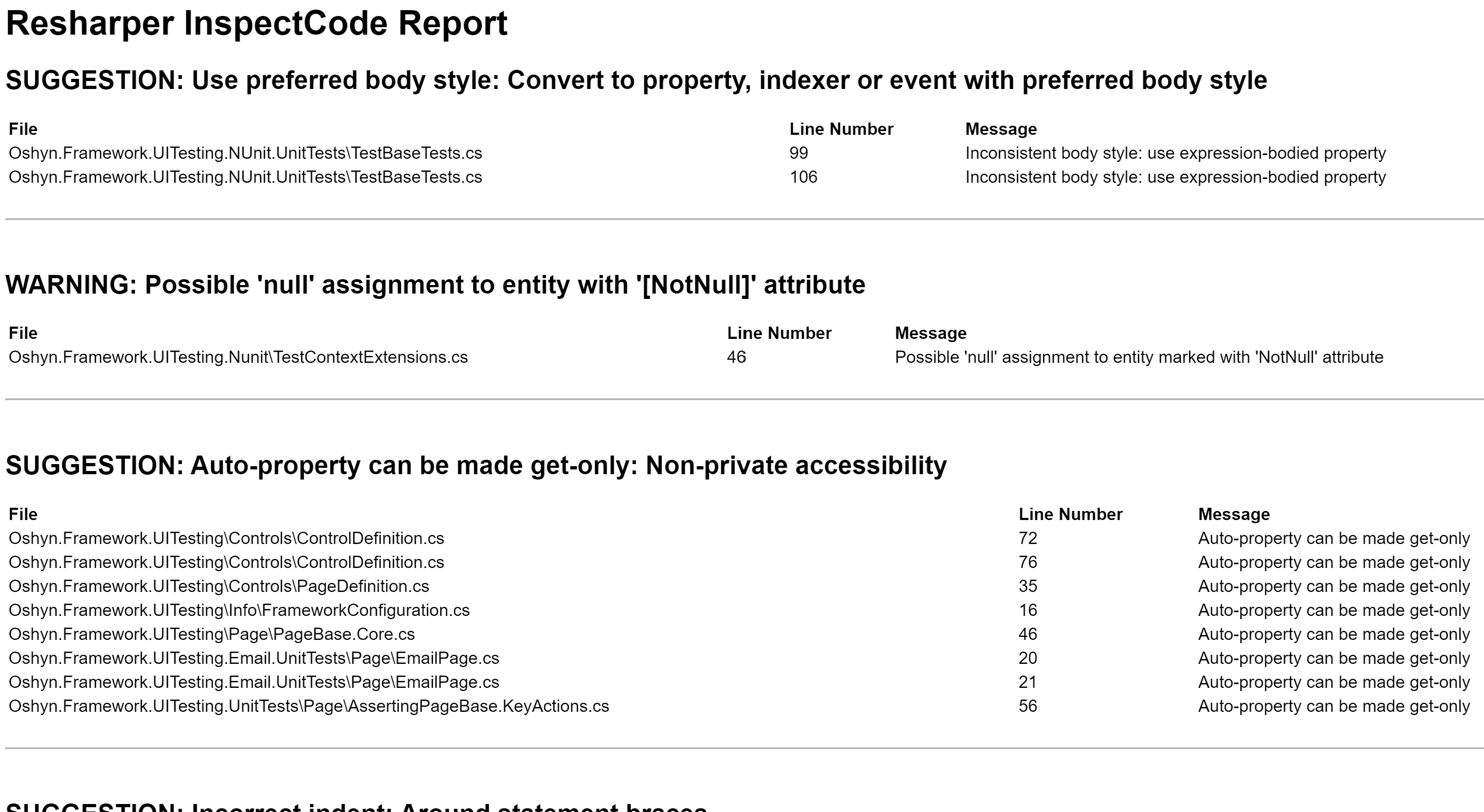

Code Style

Shows code adherence to Oshyn’s general standards, as well as to any additional standards provided by the customer (based on ReSharper InspectCode).

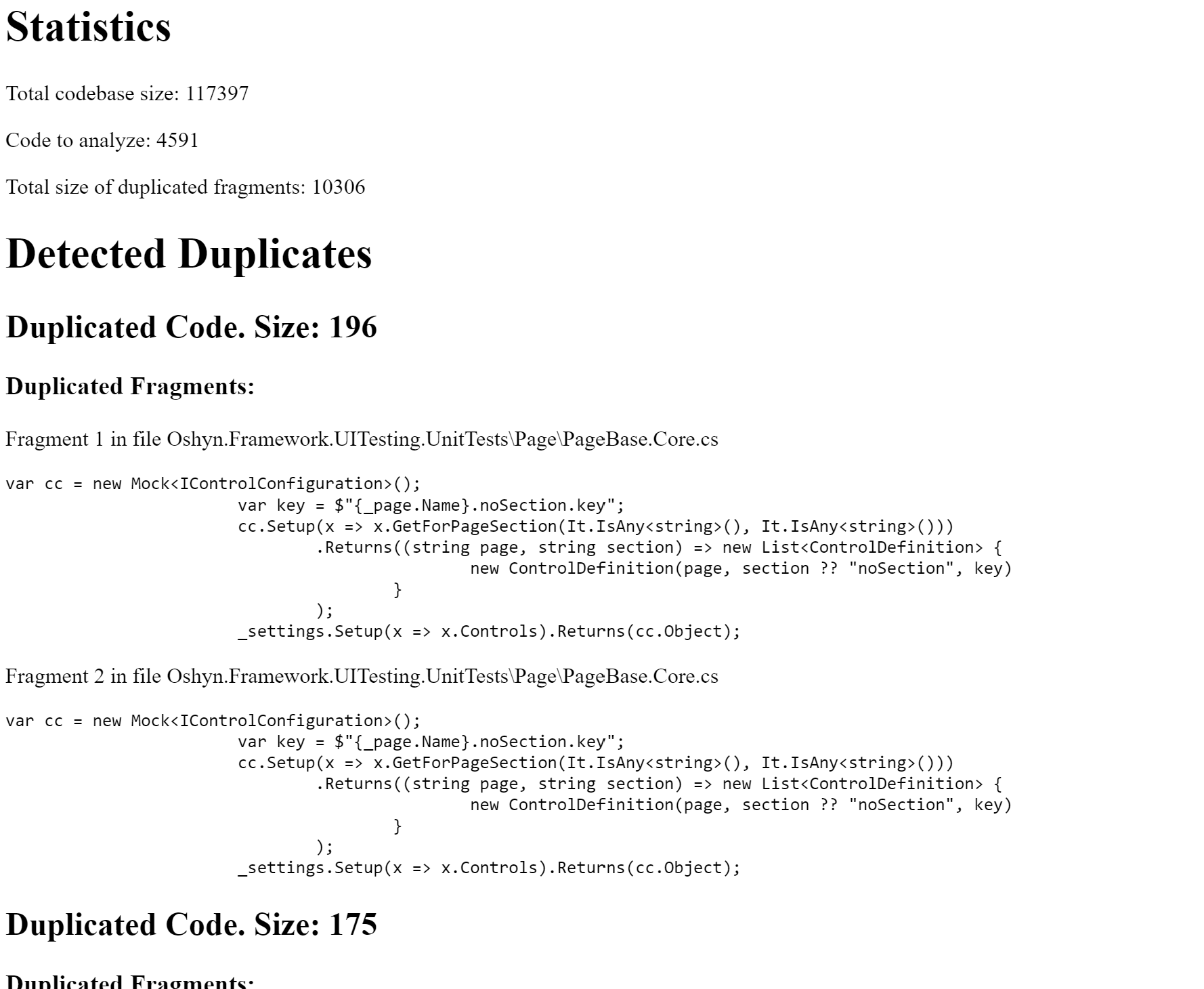

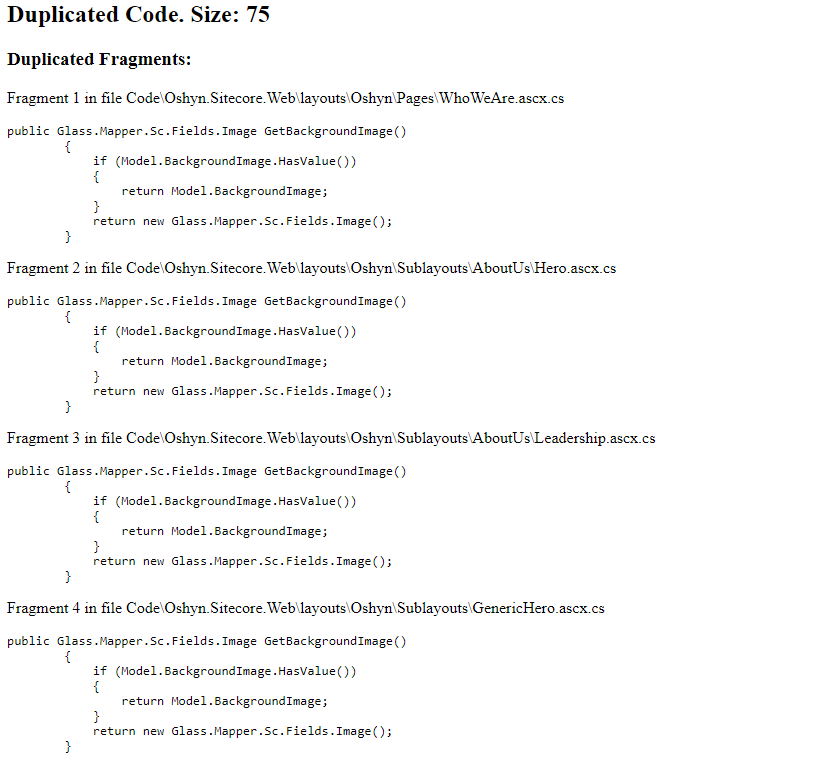

Code Duplication Checker

Checks code fragments across the solution for duplication and flags duplicates for removal (based on ReSharper dupFinder).

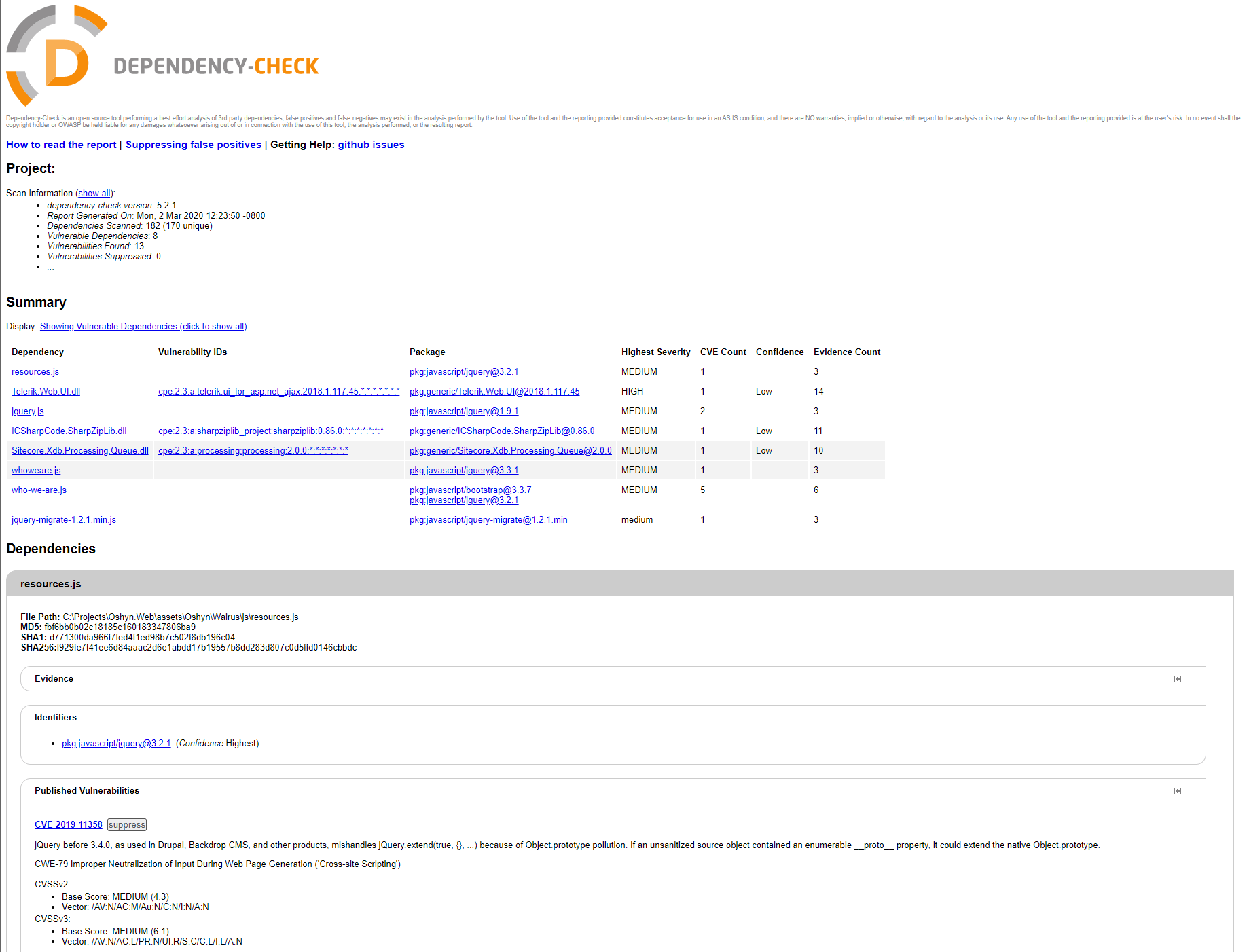

Dependency Vulnerability

Checks all assembly versions for known vulnerabilities in the NIST National Vulnerability Database (based on Dependency-Check from Jeremy Long).

Management-focused Reports

The following management-focused reports are created by our build process for every build:

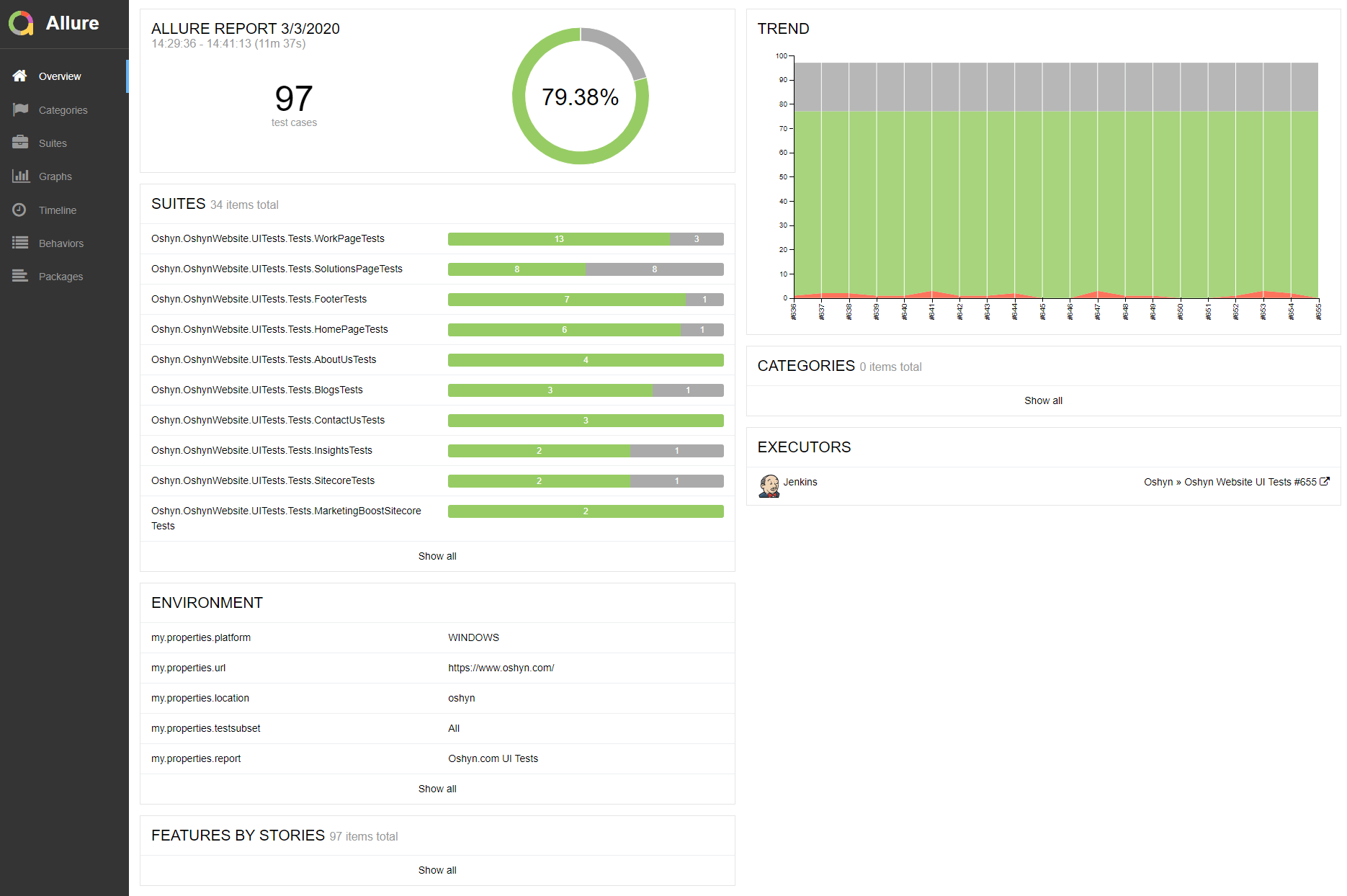

Unit Test Results

Shows the number of unit tests passed, which categories/areas they belong to, and the increase over time (based on Allure Reports).

Code Coverage

Shows code coverage by area over time for the application (based on Cobertura).
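To give a sense of how coverage by area can be read from a Cobertura-format report, the Python sketch below pulls the `line-rate` of each `<package>` element and flags areas under a minimum. The package names, the 60% threshold, and the helper names `coverage_by_package` and `below_threshold` are assumptions made for the example, not values from our projects.

```python
import xml.etree.ElementTree as ET


def coverage_by_package(cobertura_xml: str) -> dict[str, float]:
    """Map each package (area) name to its line coverage rate (0.0 to 1.0)."""
    root = ET.fromstring(cobertura_xml)
    return {
        pkg.get("name", ""): float(pkg.get("line-rate", "0"))
        for pkg in root.iter("package")
    }


def below_threshold(rates: dict[str, float], minimum: float) -> list[str]:
    """Return the names of areas whose line coverage is under the minimum."""
    return sorted(name for name, rate in rates.items() if rate < minimum)


# Hypothetical report with two areas.
SAMPLE_COVERAGE = """
<coverage line-rate="0.70" branch-rate="0.50">
  <packages>
    <package name="Example.Web" line-rate="0.82" branch-rate="0.60"/>
    <package name="Example.Data" line-rate="0.41" branch-rate="0.30"/>
  </packages>
</coverage>
""".strip()

rates = coverage_by_package(SAMPLE_COVERAGE)
low_areas = below_threshold(rates, minimum=0.60)
print(low_areas)
```

Storing the `rates` dictionary for each build is one simple way to chart coverage per area over time.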

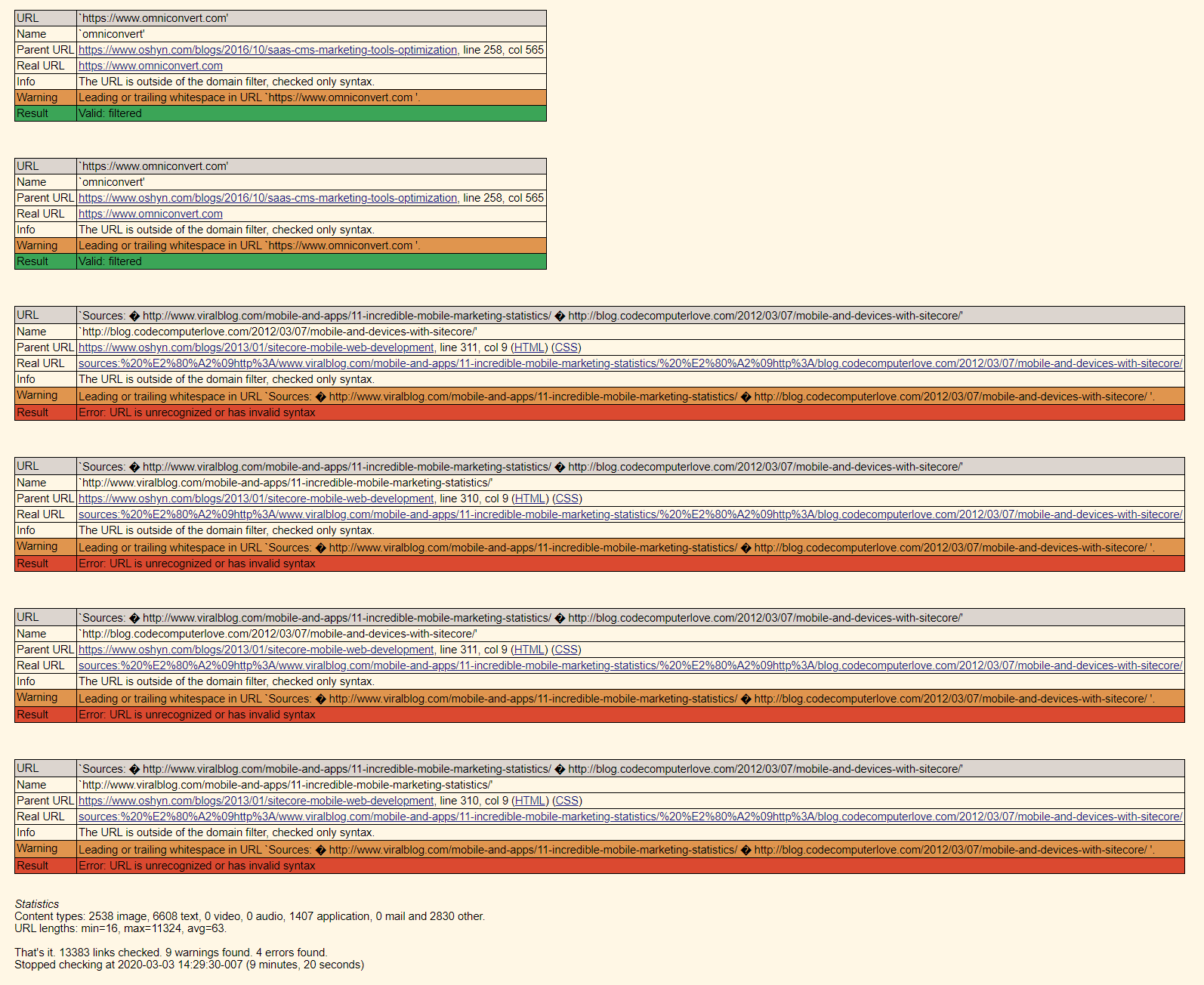

LinkChecker

Crawls the published site and reports missing pages (404s) discovered anywhere on the site (based on LinkChecker from Bastian Kleineidam).
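The idea behind a link crawl can be sketched in a few lines of Python. This is not how LinkChecker itself is implemented. It is a simplified breadth-first crawl that follows only same-site `<a href>` links and records any URL that returns a 404. The `fetch` callable, the `example.test` site, and the function names are hypothetical, and the fetcher is injected so the sketch runs without network access.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collect the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def find_missing_pages(start_url, fetch):
    """Crawl same-site links breadth-first and return URLs that answer 404.

    fetch(url) must return a (status_code, html_body) tuple.
    """
    site = urlparse(start_url).netloc
    queue = deque([start_url])
    seen = {start_url}
    missing = []
    while queue:
        url = queue.popleft()
        status, body = fetch(url)
        if status == 404:
            missing.append(url)
            continue
        parser = LinkExtractor()
        parser.feed(body)
        for href in parser.links:
            # Resolve relative links and drop #fragments before comparing.
            target, _fragment = urldefrag(urljoin(url, href))
            if urlparse(target).netloc == site and target not in seen:
                seen.add(target)
                queue.append(target)
    return missing


# Hypothetical in-memory site standing in for real HTTP requests.
FAKE_SITE = {
    "https://example.test/": '<a href="/about">About</a> <a href="/old-page">Old</a>',
    "https://example.test/about": '<a href="/">Home</a> <a href="https://other.test/x">Ext</a>',
}


def fake_fetch(url):
    if url in FAKE_SITE:
        return 200, FAKE_SITE[url]
    return 404, ""


missing_pages = find_missing_pages("https://example.test/", fake_fetch)
print(missing_pages)
```

A real crawl would replace `fake_fetch` with an HTTP client and would usually check external links, redirects, and other error codes as well.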

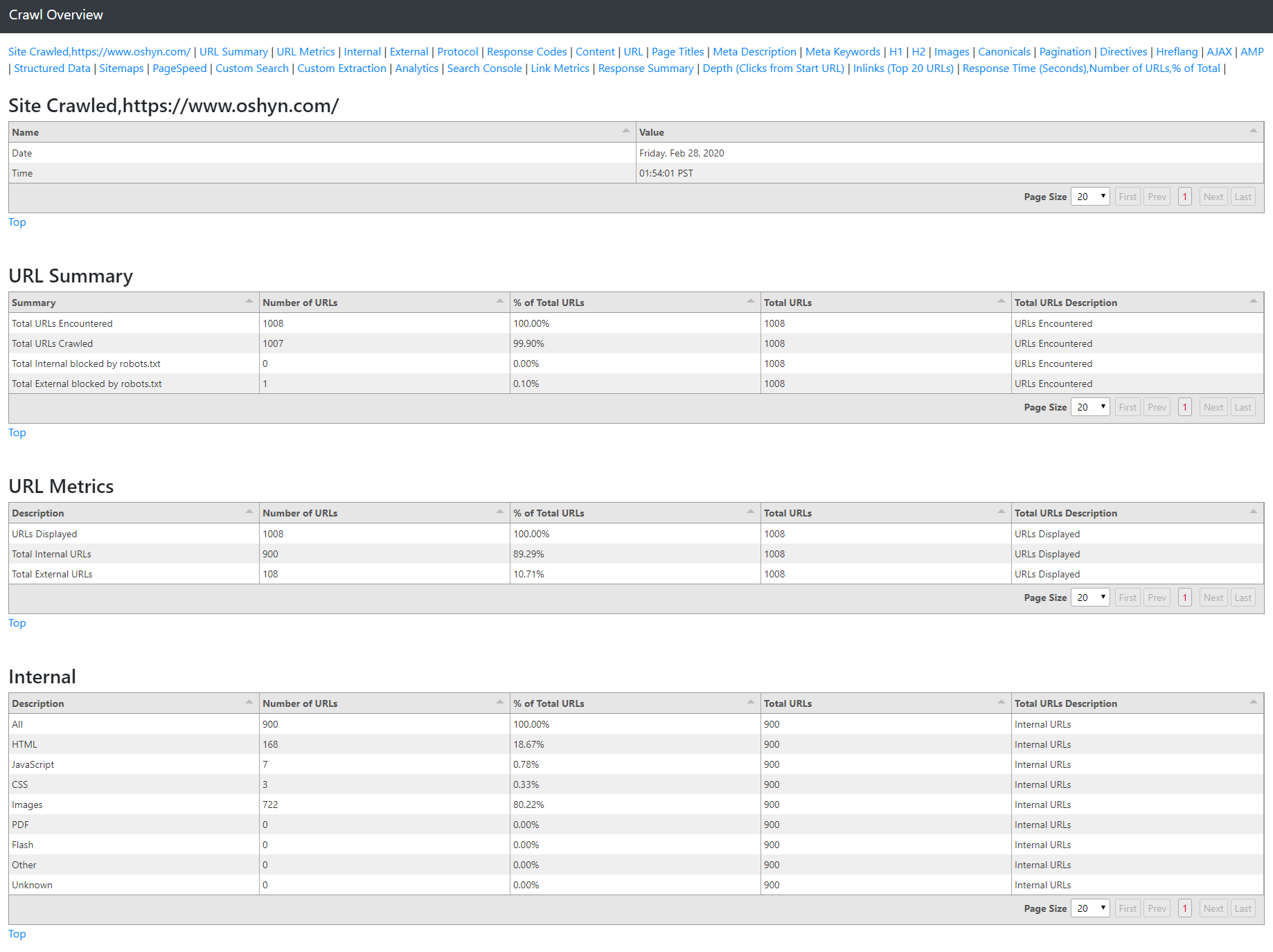

SEO Crawl Overview

Provides statistics on the crawled published website based on the industry-leading Screaming Frog SEO Spider tool.

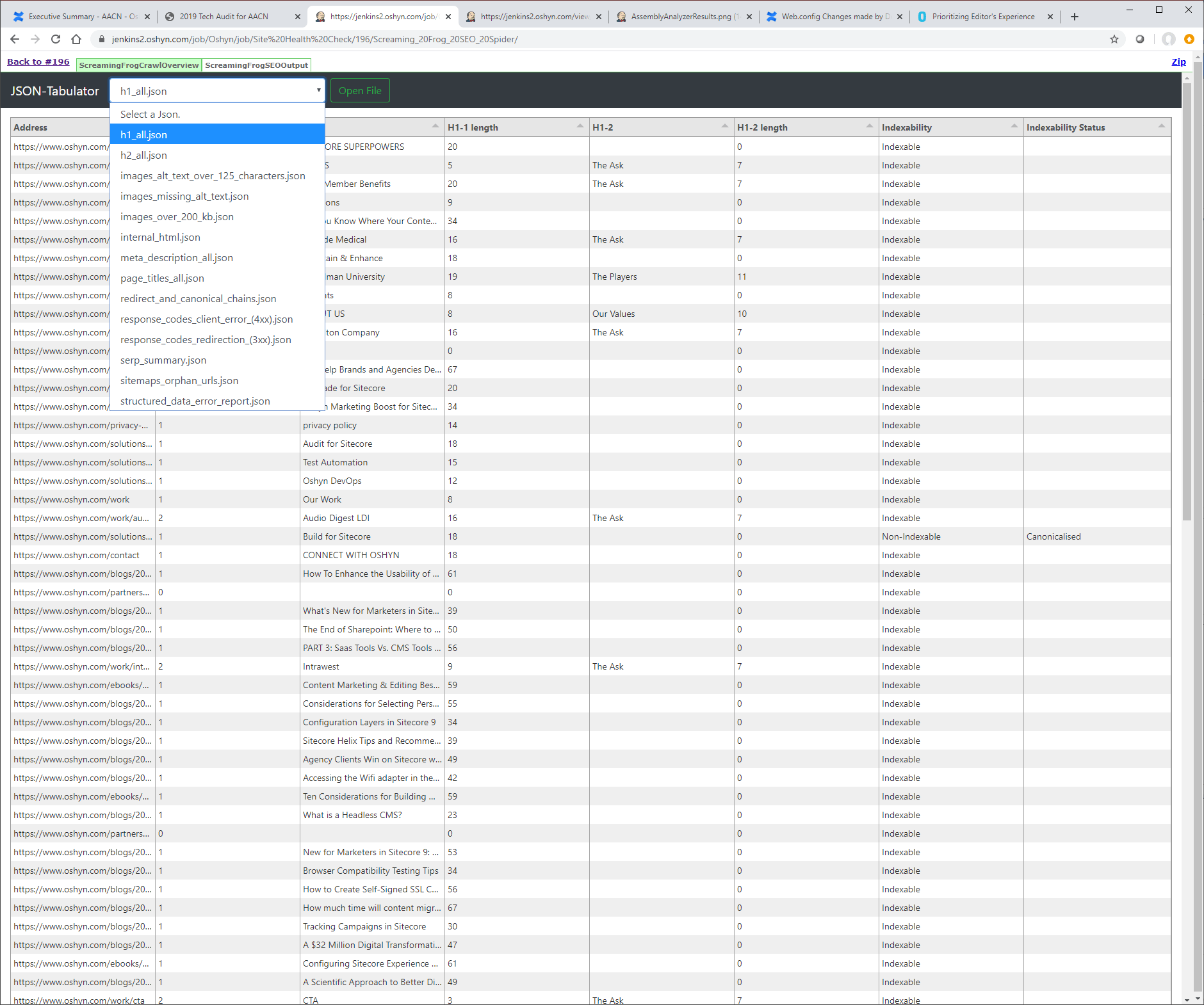

SEO Crawl Details

Provides detailed reports on various metrics useful for anyone optimizing the SEO of their site, including H1 analysis and image analysis, based on the industry-leading Screaming Frog SEO Spider tool.

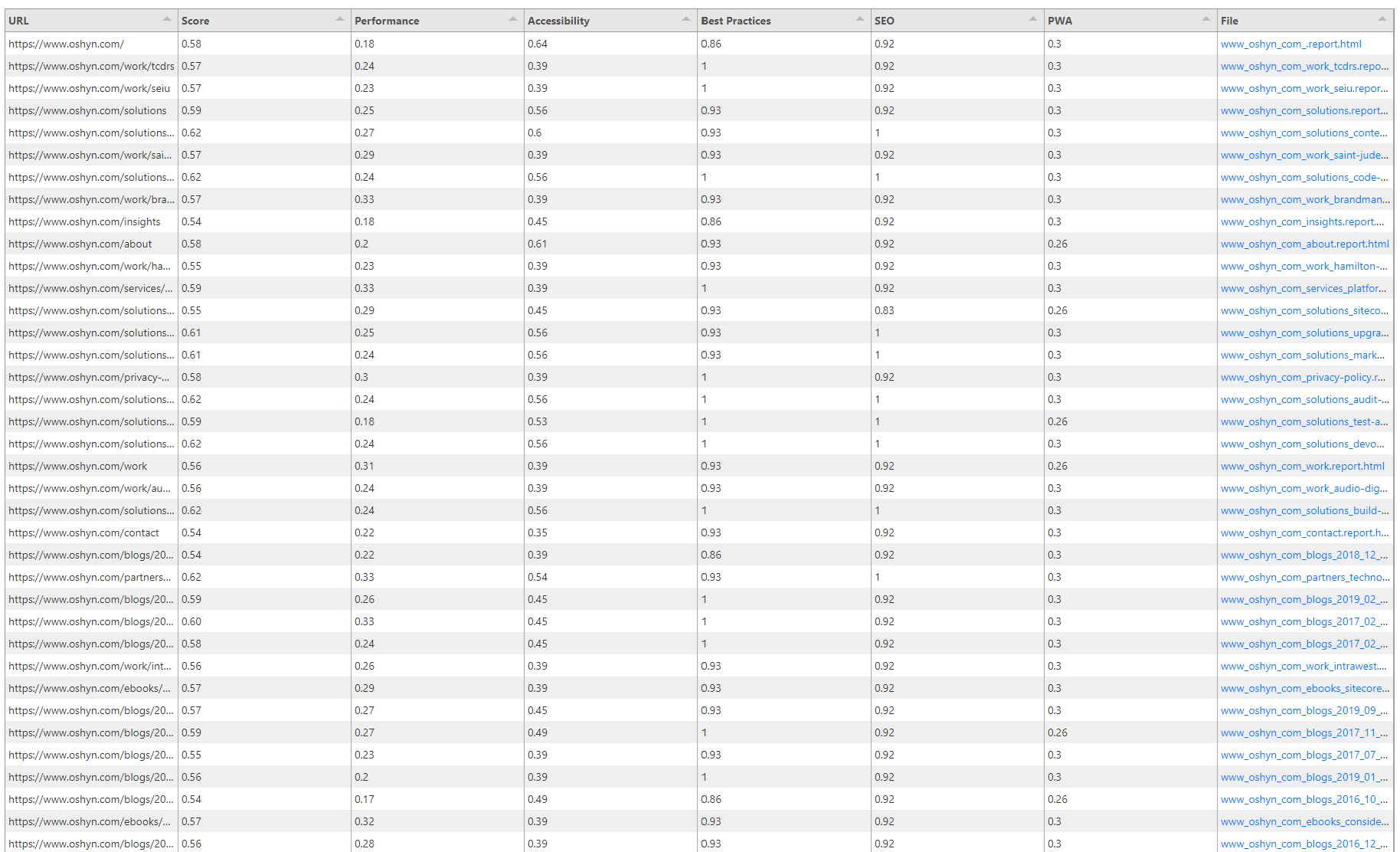

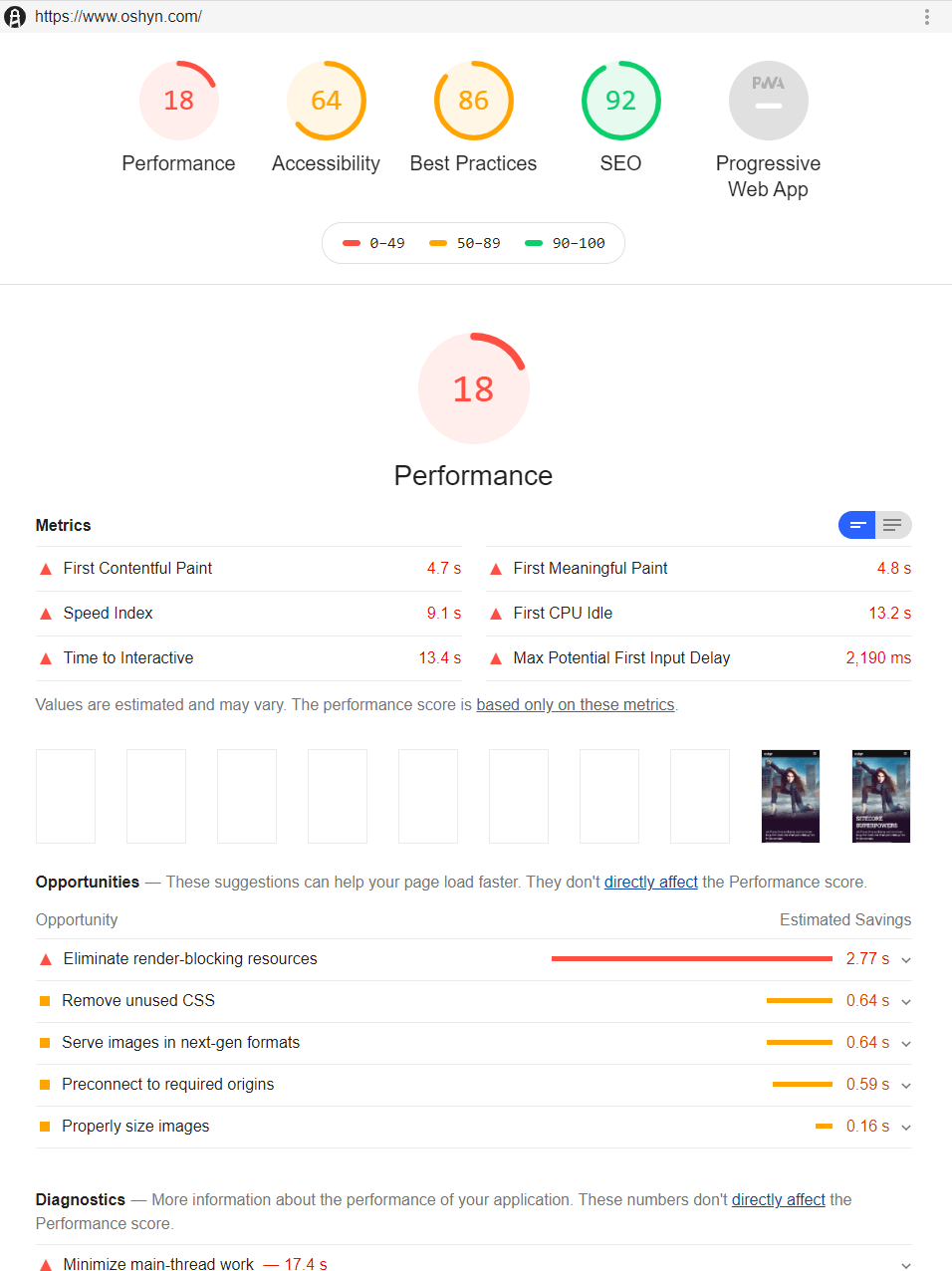

Lighthouse Reports

Shows page performance, accessibility, best practices, and SEO for your entire website, using Google’s Lighthouse.

All the reports described above are generated automatically. This lets developers and managers track progress over time and provides peace of mind that a given release has not degraded the experience of their website in any critical way: functionally, performance-wise, SEO-wise, or security-wise.