Browser Compatibility Testing Tips

Mar 01, 2012

When testing a site across different browsers, platforms, and devices, QA testers can overlook details that behave differently between environments. The result is time lost retesting functionality or specific behaviors.

Browser compatibility testing is a stage that needs special attention in the QA process. It is important because results can differ depending on many variables (e.g. the platforms used, the time when tests are executed, and the sections of the site tested).

1. What to test

There are many elements that will not change across browsers (such as image size, font color, text padding, and page background). However, there are many other elements that need more attention:

- Font size and font style: some browsers override these properties

- Special characters with HTML character encoding

- Controls alignment: bullets, radio buttons and checkboxes might not be correctly aligned

- Information submitted to the database: if there are forms that interact with the database, it is necessary to verify that the information is correctly stored

- HTML5 video format: depending on the player or plugin used, not every browser can play every video format. For example, Internet Explorer 9 natively plays only .mp4 (H.264) videos, Firefox 9 plays .webm and .ogv but not .mp4, and Chrome is more flexible (.mp4, .webm, .ogv and other video formats). Both the development team and the QA team should take care of this issue.

- Text alignment: some dropdown items will look good in Internet Explorer while in Safari they might appear too close to the upper margin.

- Plugins developed by external sites: some jQuery plugins might not work correctly, like print functionalities in IE8 or carousel rotation when playing videos.

- CMS compatibility: be sure to know which browsers the Content Management System supports, and focus mostly on those browsers versus other ones.
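The HTML5 video item above can be checked mechanically before any manual testing. The following is a minimal sketch, assuming a hypothetical support matrix (`SUPPORTED_FORMATS`) loosely based on that example; the entries are illustrative and should be verified against the real browsers in your test plan.

```python
# Illustrative support matrix (an assumption for this sketch, not test results).
# Verify each entry against the real browsers in your test plan.
SUPPORTED_FORMATS = {
    "Internet Explorer 9": {"mp4"},
    "Firefox 9": {"webm", "ogv"},
    "Chrome": {"mp4", "webm", "ogv"},
}


def browsers_without_playable_source(provided_formats, support=SUPPORTED_FORMATS):
    """Return the browsers that cannot play any of the provided video formats."""
    provided = set(provided_formats)
    return sorted(
        browser
        for browser, formats in support.items()
        if not (formats & provided)
    )


# A page that ships only an .mp4 leaves Firefox 9 without a playable source.
mp4_only = browsers_without_playable_source(["mp4"])
# Shipping both .mp4 and .webm covers every browser in the matrix.
mp4_and_webm = browsers_without_playable_source(["mp4", "webm"])

print(mp4_only)      # ['Firefox 9']
print(mp4_and_webm)  # []
```

A check like this does not replace playing the video in each browser; it only flags pages that are certain to fail somewhere.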

2. When to test

As a best practice, choose just one browser/platform and do all the testing on it during the development process. This browser should be the same as the one used by the development team. The browser compatibility testing stage should be executed at the end of the development process for two reasons:

- Most of the development work will be finished and known bugs resolved. If compatibility testing starts earlier, there is a high probability that many of the issues found will stem from the site itself rather than from a specific browser.

- The work schedule is better structured. It is easier to spend 5 hours testing the site in Chrome for Mac and then another 5 hours in Internet Explorer 8 for Win7 than to test one piece of functionality on every platform each time a new module is released.
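The per-environment schedule described above can be written down as a simple plan. This is only a sketch: the `plan_sessions` helper and the module names are hypothetical, and the 5-hour figure is taken from the example in the text.

```python
def plan_sessions(environments, modules, hours_per_environment):
    """Build one focused session per environment that covers every module."""
    return [
        {
            "environment": environment,
            "hours": hours_per_environment,
            "modules": list(modules),
        }
        for environment in environments
    ]


environments = ["Chrome / Mac", "Internet Explorer 8 / Win7"]
modules = ["home page", "contact form", "video gallery"]  # hypothetical modules

sessions = plan_sessions(environments, modules, hours_per_environment=5)
total_hours = sum(session["hours"] for session in sessions)

for session in sessions:
    print(f'{session["environment"]}: {session["hours"]} h, {len(session["modules"])} modules')
print(f"Total: {total_hours} h")
```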

3. Which browsers and platforms should be tested?

The browser and platform selection should be specified during the Requirements Gathering process. This way the whole development team, the QA team, and the client know from the beginning of the software development process which browsers will be used. This is also the point at which to research the most used browsers in order to suggest which ones should be tested.

Here are the most used browsers in 2011:

| 2011 | Internet Explorer | Firefox | Chrome | Safari | Opera |
|---|---|---|---|---|---|
| December | 20.2% | 37.7% | 34.6% | 4.2% | 2.5% |
| November | 21.2% | 38.1% | 33.4% | 4.2% | 2.4% |
| October | 21.7% | 38.7% | 32.3% | 4.2% | 2.4% |
| September | 22.9% | 39.7% | 30.5% | 4.0% | 2.2% |
| August | 22.4% | 40.6% | 30.3% | 3.8% | 2.3% |
| July | 22.0% | 42.0% | 29.4% | 3.6% | 2.4% |
| June | 23.2% | 42.2% | 27.9% | 3.7% | 2.4% |
| May | 24.9% | 42.4% | 25.9% | 4.0% | 2.4% |
| April | 24.3% | 42.9% | 25.6% | 4.1% | 2.6% |
| March | 25.8% | 42.2% | 25.0% | 4.0% | 2.5% |
| February | 26.5% | 42.4% | 24.1% | 4.1% | 2.5% |
| January | 26.6% | 42.8% | 23.8% | 4.0% | 2.5% |

Here are the most commonly used operating systems in 2011:

| 2011 | Win7 | Vista | Win2003 | WinXP | Linux | Mac | Mobile |
|---|---|---|---|---|---|---|---|
| December | 46.1% | 5.0% | 0.7% | 32.6% | 4.9% | 8.5% | 1.2% |
| November | 45.5% | 5.2% | 0.7% | 32.8% | 5.1% | 8.8% | 1.0% |
| October | 44.7% | 5.5% | 0.7% | 33.4% | 5.0% | 8.9% | 1.0% |
| September | 42.2% | 5.6% | 0.8% | 36.2% | 5.1% | 8.6% | 0.9% |
| August | 40.4% | 5.9% | 0.8% | 38.0% | 5.2% | 8.2% | 0.9% |
| July | 39.1% | 6.3% | 0.9% | 39.1% | 5.3% | 7.8% | 1.0% |
| June | 37.8% | 6.7% | 0.9% | 39.7% | 5.2% | 8.1% | 0.9% |
| May | 36.5% | 7.1% | 0.9% | 40.7% | 5.1% | 8.3% | 0.8% |
| April | 35.9% | 7.6% | 0.9% | 40.9% | 5.1% | 8.3% | 0.8% |
| March | 34.1% | 7.9% | 0.9% | 42.9% | 5.1% | 8.0% | 0.7% |
| February | 32.2% | 8.3% | 1.0% | 44.2% | 5.1% | 8.1% | 0.7% |
| January | 31.1% | 8.6% | 1.0% | 45.3% | 5.0% | 7.8% | 0.7% |

Information from w3schools.com
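Usage figures like these can be turned into a concrete suggestion for the client. The sketch below uses the December 2011 row of the browser table and picks the most used browsers until a target coverage is reached. The `browsers_to_cover` helper and the 90% target are assumptions made for illustration, not a rule from this article.

```python
# December 2011 browser shares in percent, copied from the browser table above.
DECEMBER_2011_SHARE = {
    "Internet Explorer": 20.2,
    "Firefox": 37.7,
    "Chrome": 34.6,
    "Safari": 4.2,
    "Opera": 2.5,
}


def browsers_to_cover(shares, target_percent):
    """Pick the most used browsers until their combined share reaches the target."""
    selected = []
    covered = 0.0
    by_share = sorted(shares.items(), key=lambda item: item[1], reverse=True)
    for browser, share in by_share:
        if covered >= target_percent:
            break
        selected.append(browser)
        covered += share
    return selected, covered


# The 90% target is a hypothetical threshold; agree on the real one with the client.
selected, covered = browsers_to_cover(DECEMBER_2011_SHARE, target_percent=90)
print(selected)           # ['Firefox', 'Chrome', 'Internet Explorer']
print(round(covered, 1))  # 92.5
```

General statistics are only a starting point. If the client has analytics for an existing site, those numbers are a better input for the same calculation.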

4. Set priorities

Depending on different aspects of the software process (such as the number of developers working on the project and how much time is left), the team should weigh how critical each issue is to the site against the time needed to fix it, and consider whether the issue blocks a desired behavior. This analysis makes sure that critical issues are solved before minor bugs that might take more time to fix.
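One way to make this analysis repeatable is to sort the issue list by explicit criteria. This is a minimal sketch under stated assumptions: the `Issue` fields, the 1 to 5 severity scale, and the sample issues are all hypothetical, and the ordering (blockers first, then severity, then cheaper fixes) is just one reasonable choice.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    title: str
    severity: int                  # 1 = cosmetic ... 5 = critical (hypothetical scale)
    fix_hours: float               # estimated time needed to fix the issue
    blocks_desired_behavior: bool  # True if the issue prevents a required behavior


def prioritize(issues):
    """Order issues: blockers first, then higher severity, then cheaper fixes."""
    return sorted(
        issues,
        key=lambda issue: (
            not issue.blocks_desired_behavior,
            -issue.severity,
            issue.fix_hours,
        ),
    )


# Hypothetical sample issues for illustration only.
issues = [
    Issue("Dropdown text too close to the upper margin in Safari", 2, 3.0, False),
    Issue("Form data not stored when submitted from IE8", 5, 4.0, True),
    Issue("Carousel keeps rotating while a video plays", 3, 1.0, False),
]

ordered = prioritize(issues)
for position, issue in enumerate(ordered, start=1):
    print(position, issue.title)
```

With this ordering, the blocking form issue comes first even though it takes the longest to fix, and the cosmetic dropdown issue comes last.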

5. Oshyn’s experience

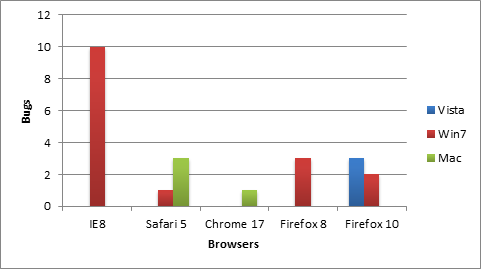

The following graph shows the results of a cross-browser compatibility tests on a recent Oshyn project. Google Chrome for Mac seems to be the browser with the lowest amount of bugs, while Internet Explorer 8 for Windows Vista had the highest amount of issues. It is important to note that Internet Explorer 9 has not been added to this chart because the testing of the site was made using this browser.

Cross-browser compatibility testing is a critical stage in the QA process. It helps find unnoticed errors and gives you a chance to add improvements to the final product.
